- cross-posted to:

- technology@lemmy.zip

Archive: https://archive.is/lP0lT

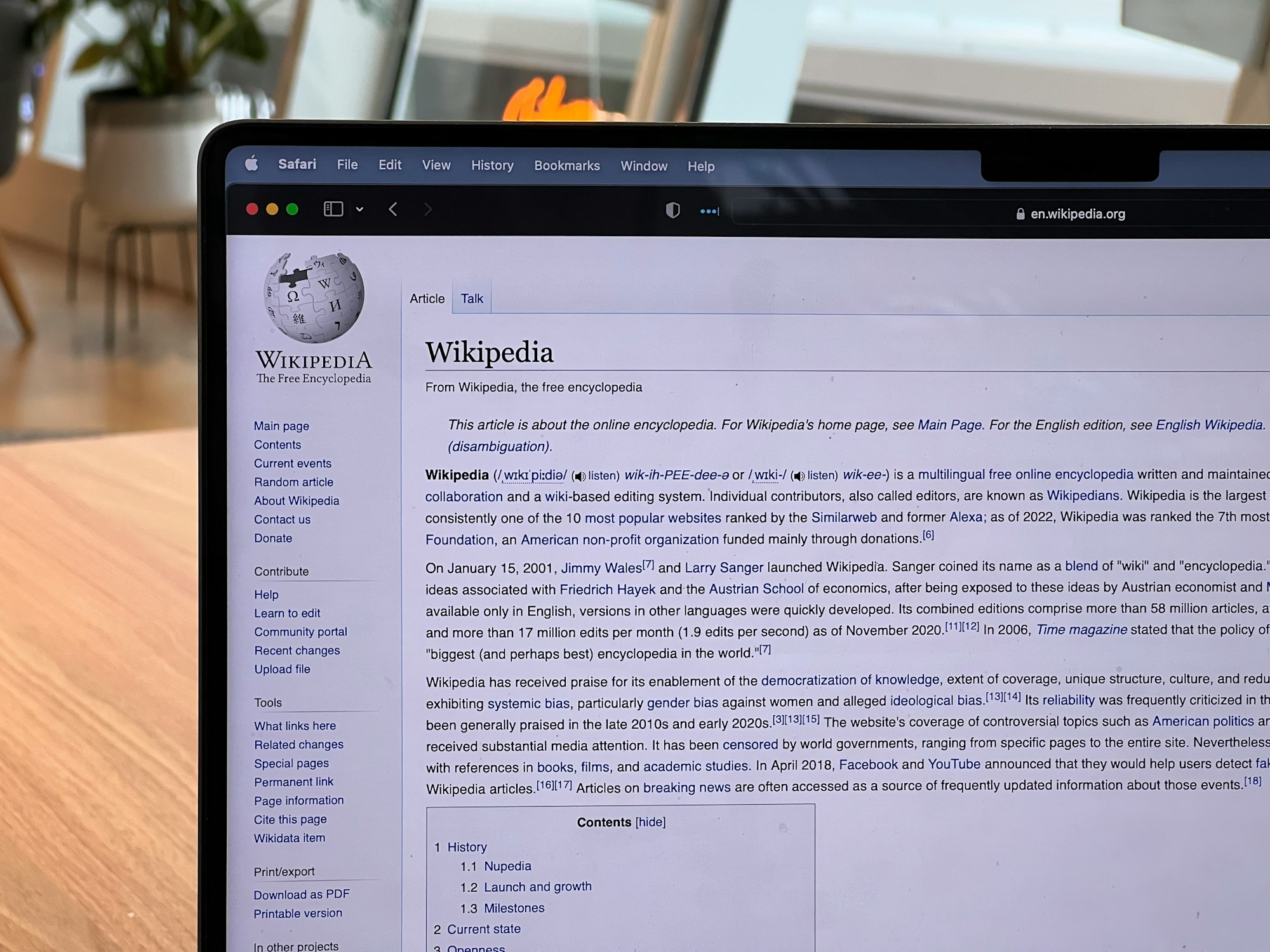

Wikipedia is becoming one of the few places where I trust the information.

It’s funny that MAGA and ml tankies both think that Wikipedia is the devil.

There’s a lot of problems with Wikipedia, but in my years editing there (I’m extended protected rank), I’ve come to terms with the fact that it’s about as good as it can be.

In all but one edit war, the better sourced team came out on top. Source quality discussion is also quite good. There’s a problem with positive/negative tone in articles, and sometimes articles get away with bad sourcing before someone can correct it, but this is about as good as any information hub can get.

Thank you for your service 🫡

I remember an article from a decade or more ago which did some research and said that basically, yes there are inaccuracies on Wikipedia, and yes there are over-simplifications, but **no more than in any other encyclopaedia**. They argued that this meant that it should be considered equally valid as an academic resource.

Any chance you remember what that one edit war was about?

It was about whether Bitcoin Cash was referred to as “Bcash” or not.

I forget the semantics, but there were a lot of sources calling it Bcash, but then there were equally reliable sources saying that was only the name given by detractors. The war was something about how Bcash should be referenced in the opening paragraph

Thank you very much!

I’m glad it was at least about something fairly trivial.

There was a hilarious one in the human anus article, about the picture being used in said article

And don’t forget the British-American bias. Hopefully the automated translation and adaptation that is being pursued by wikipedia helps to improve it.

I remember in the past few years that I’ve had to switch to non-American or non-British versions of Wikipedia just in order to find the answer I was looking for.

We need to remind Americans and Britons that knowledge on Wikipedia doesn’t stop with their languages. We need to do a better job of gathering knowledge from non-English sources and translating those into English. Same goes vice versa for English sources and pages into languages that other people can understand.

There’s still a lot of work to be done with Wikipedia to make it truly a universal knowledge repository. But it is one of the best we have

It’s worth checking out the contribs and talk regarding articles that can be divisive. People acting with ulterior motives and inserting their own bias are fairly common. They also make regular corrections for this reason. I still place more faith and trust in Wikipedia as an info source than in most news articles.

Wikipedia has an imperfect process, but it is open to review and you can see how the sausage is made. It isn’t perfect, but the best we have.

Luv u Wiki

MAGA and tankies are pretty much the same except MAGA votes while tankies whine.

Red hat vs red coat fascists

So very much on-script though

Tankies don’t think Wikipedia is the devil. You could call me a tankie from my political views, and I very much appreciate Wikipedia and use it on a daily basis. That is not to say it should be used uncritically and unaware of its biases.

Because of the way Wikipedia works, it requires sourcing claims with references, which is a good thing. The problem comes when you have an overwhelming majority of available references in one topic being heavily biased in one particular direction for whatever reason.

For example, when doing research on geopolitically charged topics, you may expect an intrinsic bias in the source availability. Say you go to China and create an open encyclopedia, Wikipedia style, and make an article about the Tiananmen Square events. You may expect that, if the encyclopedia is primarily edited by Chinese users using Chinese language sources, given the bias in the availability of said sources, the article will end up portraying the bias that the sources suffer from.

This is the criticism of tankies towards Wikipedia: in geopolitically charged topics, western sources are quick to unite. We saw it with the genocide in Palestine, where most media regardless of supposed ideological allegiance was reporting on the “both sides are bad” style at best, and outright Israeli propaganda at worst.

So, the point is not to hate on Wikipedia; Wikipedia is as good as an open encyclopedia edited by random people can get. The problem is that if you don’t specifically incorporate filters to compensate for the ideological bias present in the demographic cohort of editors (white, young males of English-speaking countries) and their sources, you will end up with a similar bias in your open encyclopedia. This is why us tankies say that Wikipedia isn’t really that reliable when it comes to, e.g., the Eastern Bloc or socialist history.

One would think that leftists, socialists, communists, tankies, and/or others would come up with supplementary wikis such as Conservapedia or RationalWiki that are good.

and, FWIW:

Category:Wikidebates

https://en.wikiversity.org/wiki/Category:Wikidebates

e.g.

Is capitalism sustainable?

https://en.wikiversity.org/wiki/Is_capitalism_sustainable%3F

It’s sad how relatively little news there is in Wikinews ( https://en.wikinews.org/wiki/Main_Page ).

supplementary wikis

We have them, e.g. ProleWiki, but good luck trying to explain to the average western Wikipedia user that for certain geopolitical topics they might be worth checking out and contrasted with Wikipedia. My problem isn’t the lack of alternatives, my problem is the anticommunist and pro-western bias in Wikipedia, the most used encyclopedia, in geopolitically charged topics.

Hmmm,

Let’s see:

Wikipedia is an imperialist propaganda outlet and disinformation website presenting itself as an encyclopedia launched in 2001 by bourgeois libertarians Jimmy Wales and Larry Sanger. Wikipedia is maintained by a predominantly white male population, of which about 1% are responsible for 80% of edits. It has also been linked to corporate and governmental manipulation and imperialist agendas, including the U.S. State Department, World Bank,[1] FBI, CIA, and New York Police Department.[2][3]

Wow. 😁🙂

and while I’m at it:

Wikipedia, is an online wiki-based encyclopedia hosted and owned by the non-profit organization Wikimedia Foundation and financially supported by grants from left-leaning foundations plus an aggressive annual online fundraising drive.[1] Big Pharma pushes its agenda and profits by paying anonymous editors to smear its opponents there, while others are moronic internet trolls who include teenagers and the unemployed.[2] As such, it projects a liberal—and, in some cases, even socialist, Communist, and Nazi-sympathizing—worldview, which is totally at odds with conservative reality and rationality.[3]

pw:Communist Party of Peru – Shining Path

The party organized its own militia, the People’s Guerrilla Army and claimed to have begun a protracted people’s war against the bourgeois government of Peru since 1980, with the intention of establishing a dictatorship of the proletariat.[1] Throughout its period of highest activity, the party frequently engaged in terrorist tactics, and has committed brutal and violent attacks on peasants, including children.[2] The class composition of the party consisted in mostly petty-bourgeois intellectuals, and the growth of the party was closely linked with student movements in universities.[3]

My problem isn’t the lack of alternatives, my problem is the anticommunist and pro-western bias in Wikipedia, the most used encyclopedia, in geopolitically charged topics.

and I suppose the supplements are one way, whatever their effectiveness or ineffectiveness.

You may disagree with the first statement on being an imperialist propaganda outlet, but the rest of the information is relevant.

I don’t get your point of posting the article on the Shining Path, though

That instance is fucking bananas

They are scared of facts.

The site engages in holocaust denial, apologia for wehrmacht, and directly collaborates with western governments. On the talk pages users will earnestly tell you that mentioning napalm can stick to objects when submerged in water constitutes “unnecessary POV”, and third-degree burns are painless because they destroy nerve tissue (don’t ask how the tissue got destroyed, and they will not be banned for this so get used to it). Jimmy Wales is a far-right libertarian. It might be a reliable source of information for reinforcing your own worldview, but it’s not a project to create the world’s encyclopedia. Something like that would at least be less stingy about what a “notable sandwich” is.

Citation needed.

Removed by mod


Show me. That’s a simple request.

As a Wikipedia editor I can confirm - we regularly say that napalm sticking to objects in water is POV. I do it at least twice a week. I’ll try making a bot to do it automatically so I’ll have more time for holocaust denial.

I have been editing Wikipedia since 2004, and my very first edit was to deny a clearly POV edit to a sticky napalm article. It’s kind of a point of pride for me.

As a fellow Wikipedia editor I have confirmed that you are in fact the intern who kept making edits directly from the Capitol without even using a VPN.

Ah yes, you were personally insulted and now discredit the biggest collection of knowledge the world has ever had. Fuck you, you fool.

WRONG. You are thinking of the Quran 🙏🏻🤲🏻

No it doesn’t

This is a very low-quality reply. Try making more high-quality replies to contribute to discussions here on Lemmy. Thanks!

I don’t care what that is, you post too much on here. I’m blocking and supplanting you.

Supplanting?

You’ll have to use smaller words for me, boss.

Try not making shit up

FWIW,

wp:Talk:Napalm#Burns_under_water?

wp:Talk:Burn/Archive 1#Burn pain

This page was last edited on 20 February 2024, at 12:50 (UTC).

Wales defended his comments in response to backlash from supporters of Gamergate, saying that “it isn’t about what I believe. Gg is famous for harassment. Stop and think about why.”[125]

…

Wales labeled himself a libertarian, qualifying his remark by referring to the Libertarian Party as “lunatics”, and citing “freedom, liberty, basically individual rights, that idea of dealing with other people in a manner that is not initiating force against them” as his guiding principles.[10] In a 2014 tweet, he expressed support for open borders.[104]

…

Wales has lived in London since 2012,[146] and became a British citizen in 2019.[147]

Yeah endless waves of attempted edits to tone down war crimes is the tip of the iceberg, a subject I will circle back to in the coming days. This is a bizarre comment on several levels. So is he less right-wing because he is British? It doesn’t matter if you enjoy particular sects of Ayn Rand worshippers, it makes no difference to me. The Gamergate business is the typical damage control response you see out of any site owner amenable to Nazis. Exactly like reddit admins. “We don’t take sides against the right-wing ☝️🤓 they broke site rules fair and square.” Typical bs. Nerds think everyone else is as singlemindedly gullible as them, invariably. Unfortunately the legal system demands people adhere to it

growing up I got taught by teachers not to trust Wiki bc of misinformation. times have changed

Nope, we all misunderstood what they meant. Wikipedia is not an authoritative source, it is a derivative work. However, you can use the sources provided by the Wikipedia article and use the article itself to understand the topic.

Wikipedia isn’t and was never a primary source of information, and that is by design. You don’t declare information in encyclopedias, you inventory information.

Wikipedia was not then what it is now. You’re spot on with all that, spot on, but in the early days it wasn’t nearly as trustworthy.

Fair enough, I’m not old enough to remember those days of Wikipedia, my memory starts in roughly 2010 wrt Wikipedia use 😅

You can check old versions of any article by clicking ‘history’. And yeah, the standards used to be pretty low.

“Nope” to what exactly? you regurgitated what I said - but told us how you misunderstood it

Now in some states, you can’t trust teachers not to be giving you misinformation.

We homeschool our daughter. Saw a cool history through film course that taught with an example movie every week to grow interest… nothing in the itinerary said they’d play a video of Columbus by PragerU. They refused the refund, as it was 2 weeks in, and said it was used to foment conversation, but no other video was being offered or no questions were prepared to challenge the children. I worded my letter to call out the facts about Columbus vs the video, and the lack of accreditation of the source. I tried not to be the “lib”, but I very much got the gist that’s their opinion of me, and how they brushed me off. That fucking site is a plague on common sense, decency, and truth. Still fired up, and it was last month. We pulled her out of the course immediately after the video.

I can’t imagine homeschooling. Not that I think it’s bad but that it has to be so hard to do. And harder still to do it right.

Glad you pulled out of that course. PragerU is hot garbage and I hate how my autocorrect apparently knows PragerU and didn’t try to change it to something else.

How hard do you find it to homeschool? How many hours do you reckon it takes a day?

You’ve gotta keep in mind that in a regular school your kid is one of 20-30 for the teacher and they are lucky if they get five minutes of individual help/instruction. Everything else is just lecture, reading, and assignments.

It doesn’t have to be onerous. We homeschooled until around 3rd grade. Even so, the other kids they are in school with are academically… not stellar. My youngest (13) has a reading disability and she struggles to pass classes. She still frequently finds herself helping out other students because they are even worse off.

I’m not anti-public education, but whether it’s Covid or just republicans gutting the system, public education is in a state right now. I figure funding needs to increase by 30-50%. Kids need more resources than they are getting. And until they do, homeschooling isn’t an unreasonable option. But it’s not for everyone, of course. One parent has to work (or not) from home or odd hours.

I’m not exactly qualified to speak on the issue, but I think it’s also important to focus on where the money gets spent. Anecdotally it seems like a lot is spent on classroom tech (“smart boards”, Chromebooks, iPads), which while nice, has abysmal value in terms of returns on cost.

Personally, I think the most important things are basic supplies, school lunches, and teacher salaries.

If this is only 6 weeks ago now then you can still most likely do a credit card charge back if you paid that way

Not to trust wiki as a format? Or did you mean Wikipedia specifically?

subject at hand was wikipedia, but it applies to any wiki format I guess - just check sources.

deleted by creator

Who would’ve thought??

How ironic that school teachers spent decades lecturing us about not trusting Wikipedia… and now, the vast majority of them seem to rely on Youtube and ChatGPT for their lesson plans. Lmao

Unfortunately the current head of Wikipedia is pro-AI which has contributed to this lack of trust.

One thing I don’t get: why the fuck don’t LLMs use Wikipedia as a source of info? It would help them come up with less bullshit. I experimented with some, even Perplexity, which searches the web and gives you links, but it always has shit sources like Reddit or SEO-optimized nameless news sites.

Perplexity is okay with more academic topics at the least, albeit pretty shallow (usually isn’t that different to google). There might be a policy not to include encyclopedias, but it would be an improvement over SEO garbage for sure.

Yeah, I use it instead of search, as that has gone to shit years ago due to all the SEO garbage, and now it’s even worse with AI-generated SEO garbage.

At least this way I get fast results, and mostly accurate on the high level. But I agree that if I try to go deeper, it just makes up stuff based on 9 yrs old reddit posts.

I wish somebody built an AI model that prioritized trusted data, like encyclopedias, wiki, vetted publication, prestige news portals. It would be much more useful, and could put Google out of business. Unfortunately, Perplexity is not that

It’s not that AI doesn’t or can’t use Wikipedia (it actually does), but AI can’t reliably produce an accurate statement in general. It hallucinates so goddamn much, and that can never, ever, be solved, because it is at the end of the day just arranging tokens based on a statistical approximation of things people might say. It has been proven that modern LLMs can never come even close to human accuracy, even with infinite power and resources.

That said, if an AI is blocked from using Wikipedia then that would be because the company realized Wikipedia is way more useful than their dumb chatbot.

TenBlueLinks will cut the AI results out of your Google searches by switching the browser’s default to the web API…

I cannot tell you how much I love it.

Or better yet, ditch Google altogether.

I switched to Startpage, an EU-based search engine.

Not EU based, and not free, but I’ve been loving Kagi.

Seconding Kagi, it’s worth every penny.

Well, I’m glad. That being said, Startpage is a search engine located in the Netherlands that you can start using now. Just go to the site. Kagi is paid.

Yes! Startpage rocks hard. I wish Safari would let it be the default search engine (FF does with a simple plug in).

Startpage! No shit. Used to be Ixquick, and I used that for years. Great site - thank you for reminding me it’s still there. :)

I’ve been using startpage, but doesn’t it still rely on google results somehow?

FWIW, wp:Startpage.

For Firefox on Android (which TenBlueLinks doesn’t have listed) add a new search engine and use these settings:

Name: Google Web

Search string URL: https://www.google.com/search?q=%s&udm=14

As @Saltarello@lemmy.world learned before I did, strip the number 25 from the string above so it looks more like this:

www .google.com/search?q=%s&udm=14

Edit: Lemmy/Voyager formats this string with 25 at the end. Remove the 25 & save it as a browser search engine.

EDIT: There’s got to be a Markdown option for disabling Markdown auto-formatting of links, right?? The escape backslash seems to not be working for this specifically.

EDIT II: Found a nasty hack that does the trick!

https[]()://www.example.com/search?q=%s

appears as:

Lemmy also does code markup with `text`

https://www.google.com/search?q=%25s&udm=14

Indeed, @SnotFlickerman@lemmy.blahaj.zone, like so!:

Edit, oh is it buggy with parameters per downthread? Interesting
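
FWIW, the stray 25 discussed above is most likely just percent-encoding: a literal `%` gets escaped as `%25`, so `%s` turns into `%25s`. Here is a minimal Python sketch, assuming the `udm=14` template quoted in this thread. The function names `build_search_url` and `over_encode` are made up for illustration, and `over_encode` only imitates what a link formatter might do; it is not Lemmy's actual code.

```python
from urllib.parse import quote

# Search template quoted in the thread; %s is the browser's query placeholder.
TEMPLATE = "https://www.google.com/search?q=%s&udm=14"


def build_search_url(query: str) -> str:
    """Replace the %s placeholder with a percent-encoded query (hypothetical helper)."""
    return TEMPLATE.replace("%s", quote(query, safe=""))


def over_encode(template: str) -> str:
    """Imitate a formatter that escapes every literal '%' as '%25' (assumption)."""
    return template.replace("%", "%25")


if __name__ == "__main__":
    print(build_search_url("wikipedia trust"))
    print(over_encode(TEMPLATE))
```

The browser does the first substitution itself once the template is saved as a search engine; the second function is only there to show where the 25 comes from, and why it has to be removed before saving.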

Thank you.

Oh thank you I’ve been looking for this

I would like to say Google is still better at finding search results with more than one word. For example, if somebody searches “santa claus porn” then DuckDuckGo or Ecosia will probably return images of porn or images of santa claus instead of images of santa claus porn.

However, that is no longer true either, because Google search continues to get worse all the time. So it’s like there aren’t any good search engines anymore.

Yeah switching search links will help but it’s a band-aid. AI has stolen literally everyone’s work without any attempt at consent or remuneration and the reason is now your search is 100 times faster, comes back with exactly something you can copy & paste and you never have to dig through links or bat away confirmation boxes to find out it doesn’t have what you need.

It’s straight up smash-n-grab. And it’s going to work. Just like everybody and their grandma gave up all their personal information to facebook so will your searches be done through AI.

The answer is to regulate the bejesus out of AI and ensure they haven’t stolen anything. That answer was rendered moot by electing trump.

I don’t know about you, but my results have been wrong or outdated at least a quarter of the time. If you flip two coins and both are heads, your information is outright useless. What’s the point in looking something up to maybe find the right answer? We’re entering a new dark age, and I hate it.

I’ve been asking a bunch of next-to-obvious questions about things that don’t really matter and it’s been pretty good. It still confidently lies when it gives instructions but a fair amount of time it does what I asked it for.

I’d prefer to not have it, because it’s ethically putrid. But it converts currency and weights and translates things as well as expected and in half the time i’d spend doing it manually. Plus I kind of hope using it puts them out of business. It’s not like I’d pay for it.

I refuse to believe that it’s in any way better or faster at unit and currency conversion than plain Google or DuckDuckGo. Literally type “100 EUR to USD” and you’ll get an almost instant answer. Same with units: “100 feet to meters”.

And if you’re using it, you’re helping their business. It’s as simple as that.

100%. Unit conversion is a solved problem, and it is impossible for an AI to be faster or more accurate than any of the existing converters.

I do not need an AI calculator, because I have no desire to need to double check my calculator.

Well spotted. I retract my notion that unit conversion was convenient. Clearly I should have switched to another tab to do the thing that is solved.

Removed by mod

But it converts currency and weights and translates things as well as expected and in half the time i’d spend doing it manually

So does qalc, and it can also do arithmetic and basic calculus quickly and (gasp) correctly!

Curious what and how you’re prompting. I get solid results, but I’m only asking for hard facts, nothing that could have opinion or agenda inserted. Also, I never go past the first prompt. There be dragons that way.

Niche history and mineralogy topics. Just looking for threads to tug. I found that it offered me threads but they often did not lead anywhere relevant or outright did not exist. Which is fine, but kinda removes my need for AI. If I have a general purpose question, I check certain websites. I already know how to serve myself everyday information. AI’s just not helpful for my use case.

Overall, It’s time neutral. But it raises my blood pressure when it hallucinates, and dying of a stroke is undesirable for me.

I eat out, and lately I’ve been overhearing people at other tables talking about how they find shit with ChatGPT, and it’s not a good sign.

They stopped doing research as it used to be for about 30 years.

I was chatting with some folks the other day and somebody was going on about how they had gotten asymptomatic long-COVID from the vaccine. When asked about her sources her response was that AI had pointed her to studies and you could viscerally feel everybody else’s cringe.

asymptomatic long-COVID

The hell even is that? Asymptomatic means no symptoms. Long-COVID isn’t a contagious thing, it’s literally a description of the symptoms you have from having COVID and the long term effects.

God that makes my freaking blood boil.

Damn @BigBenis@lemmy.world that was a hell of a conversation you were having.

“Cool, send me the actual studies.”

*crickets*

Assuming this AI shit doesn’t kill us all and we make it to the conclusion that robots writing lies on websites perhaps isn’t the best thing for the internet, there’s gonna be a giant hole of like 10 years where you just shouldn’t trust anything written online. Someone’s gonna make a bespoke search engine that automatically excludes searching for anything from 2023 to 2035.

I can’t really fault them for it tbh. Google has gotten so fucking bad over the last 10 years. Half of the results are just ads that don’t necessarily have anything to do with your search.

Sure, use something else like Duckduckgo, but when you’re already switching, why not switch to something that tends to be right 95% of the time, and where you don’t need to be good at keywords, and can just write a paragraph of text and it’ll figure out what you’re looking for. If you’re actually researching something you’re bound to look at the sources anyway, instead of just what the LLM writes.

The ease of access of LLMs, and the complete and utter enshittification of Google is why so many people choose an LLM.

I believe DuckDuckGo is just as bad. I think they changed their search to match Google. I’m not sure if you are allowed to exclude search terms, use quotes, etc.

I had a song intermittently stuck in my head for over a decade, couldn’t remember the artist, song name, or any of the lyrics. I only had the genre, language it was in, and a vague, memory-degraded description of a music video. Over the years I’d tried to find it on search engines a bunch of times to no avail, using every prompt I could think of. ChatGPT got it in one. So yeah, it’s very useful for stuff like that. Was a great feeling to scratch that itch after so long. But I wouldn’t trust an LLM with anything important.

LLMs are good at certain things, especially involving language (unsurprisingly). They’re tools. They’re not the be-all-end-all like a lot of tech bros proselytize them as, but they are useful if you know their limitations.

If you use them properly, they can be a valuable addition to one’s search for information. The problem is that I don’t think most users use them properly.

They stopped doing research as it used to be for about 30 years.

Was it really “like that” for any length of time? To me it seems like most people just believed whatever bullshit they saw on Facebook/Twitter/Insta/Reddit, otherwise it wouldn’t make sense to have so many bots pushing political content there. Before the internet it would be reading some random book/magazine you found, and before then it was hearsay from a relative.

I think that the people who did the research will continue doing the research. It doesn’t matter if it’s thru a library, or a search engine, or Wikipedia sources, or AI sources. As long as you know how to read the actual source, compare it with other (probably contradictory) information, and synthesize a conclusion for yourself, you’ll be fine; if you didn’t want to do that it was always easy to stumble upon misinfo or disinfo anyways.

One actual problem that AI might cause is if the actual scientists doing the research start using it without due diligence. People are definitely using LLMs to help them write/structure the papers ¹. This alone would probably be fine, but if they actually use it to “help” with methodology or other content… Then we would indeed be in trouble, given how confidently incorrect LLM output can be.

I think that the people who did the research will continue doing the research.

Yes, but that number is getting smaller. Where I live, most households rarely have a full bookshelf, and instead nearly every member of the family has a “smart” phone; they’ll grab the chance to use anything that would be easier than spending hours going through a lot of books. I do sincerely hope methods of doing good research are still continually being taught, including the ability to distinguish good information from bad.

The internet (via your smartphone) provides you with the ability to find any book, magazine or paper on any subject you want, for free (if you’re willing to sail under the right flag), within seconds. Of course no one has a full bookshelf anymore; the only reason to want physical books nowadays is sentimentality or some very specific old book that hasn’t been digitized yet (but in that case you won’t have it on your bookshelf and will have to go to the library anyway). The fastest and most accurate way of doing research today is getting a gist on Wikipedia, clicking through the source links and reading those, and combing through arxiv and scihub for anything relevant. If you are unfamiliar with the subject as a whole, you download the relevant book and read it. Of course no one wants to comb through physical books anymore; it’s a complete waste of time (provided of course they have been digitized).

If this AI stuff weren’t a bubble and the companies dumping billions into it were capable of any long term planning they’d call up wikipedia and say “how much do you need? we’ll write you a cheque”

They’re trying to figure out nefarious ways of getting data from people and wikipedia literally has people doing work to try to create high quality data for a relatively small amount of money that’s very valuable to these AI companies.

But nah, they’ll just shove AI into everything and blow the equivalent of Wikipedia’s annual budget in a week on just electricity to shove unwanted AI slop into people’s faces.

Because they already ate through every piece of content on wikipedia years and years ago. They’re at the stage where they’ve trawled nearly the entire internet and are running out of content to find.

So now the AI trawls other AI slop, so it’s essentially getting inbred. So they literally need you to subscribe to their AI slop so they can get new data directly from you because we’re still nowhere near AGI.

But nah, they’ll just shove AI into everything and blow the equivalent of Wikipedia’s annual budget in a week on just electricity to shove unwanted AI slop into people’s faces.

You’re off by several orders of magnitude, unfortunately. Tech giants are spending the equivalent of the entire fucking Apollo program on various AI investments every year at this point.

(pasting a Mastodon post I wrote a few days ago on StackOverflow, but IMHO it applies to Wikipedia too)

"AI, as in the current LLM hype, is not just pointless but rather harmful epistemologically speaking.

It’s a big word so let me unpack the idea with 1 example :

- StackOverflow, or SO for short.

So SO is cratering in popularity. Maybe it’s related to the LLM craze, maybe not, but in practice fewer and fewer people are using SO.

SO is basically a software developer social network that goes like this :

- hey I have this problem, I tried this and it didn’t work, what can I do?

- well (sometimes condescendingly) it works like this so that worked for me and here is why

then people discuss via comments, answers, vote, etc until, hopefully the most appropriate (which does not mean “correct”) answer rises to the top.

The next person with the same, or similar enough, problem gets to try right away what might work.

SO is very efficient in that sense but sometimes the tone itself can be negative, even toxic.

Sometimes the person asking did not bother to search much, sometimes they clearly have no grasp of the problem, so replies can be terse, if not worse.

Yet the content itself is often correct in the sense that it does solve the problem.

So SO in a way is the pinnacle of “technically right” yet being an ass about it.

Meanwhile what if you could get roughly the same mapping between a problem and its solution but in a nice, even sycophantic, manner?

Of course the switch will happen.

That’s nice, right?.. right?!

It is. For a bit.

It’s actually REALLY nice.

Until the KPI of the “thing” you “discuss” with turns out to be keeping you engaged (as its owner gets paid per interaction), regardless of how usable (let’s not even say true or correct) its answer is.

That’s a deep problem because that thing does not learn.

It has no learning capability. It’s not just “a bit slow” or “dumb” but rather it does not learn, at all.

It gets updated with a new dataset, fine-tuned, etc… but there is no action that leads to the invalidation of a hypothesis, the generation of a novel one, and then … the setup of a safe environment to test it within (that’s basically what learning is).

So… you sit there until the LLM gets updated but… with what? Now that fewer and fewer people bother updating your source (namely SO), how is your “thing” going to learn, sorry, get updated, without new contributions?

Now if we step back not at the individual level but at the collective level we can see how short-termist the whole endeavor is.

Yes, it might help some, even a lot, of people to “vile code”, sorry, I mean “vibe code”, their way out of a problem, but if:

- they, the individual

- it, the model

- we, society, do not contribute back to the dataset to upgrade from…

well I guess we are going faster right now, for some, but overall we will inexorably slow down.

So yes, epistemologically we are slowing down, if not worse.

Anyway, I’m back on SO, trying to actually understand a problem. Trying to actually learn from my “bad” situation and rather than randomly try the statistically most likely solution, genuinely understand WHY I got there in the first place.

I’ll share my answer back on SO, hoping to help others.

Don’t just “use” a tool, think, genuinely, it’s not just fun, it’s also liberating.

Literally.

Don’t give away your autonomy for a quick fix, you’ll get stuck."

originally on https://mastodon.pirateparty.be/@utopiah/115315866570543792

I honestly think that LLMs will result in no progress ever being made in computer science.

Most past inventions and improvements were made out of necessity, because of how sucky computers are and how unpleasant it is to work with them (we call them “abstraction layers”). And it was mostly done on companies’ dime.

Now companies will prefer to produce slop (even more) because they hope to automate slop production.

As an expert in my engineering field I would agree. LLMs have been a great tool for my job, helping me get better at technical writing or get over the hump of coding something every now and then. That’s where I see the future for ChatGPT/AI LLMs: providing a tool that can help people broaden their skills.

There is no future for the expertise in fields and the depth of understanding that would be required to make progress in any field unless specifically trained and guided. I do not trust it with anything that is highly advanced or technical as I feel I start to teach it.

Most importantly, the pipeline from finding a question on SO that you also have, to answering that question after doing some more research is now completely derailed because if you ask an AI a question and it doesn’t have a good answer you have no way to contribute your eventual solution to the problem.

Maybe SO should run everyone’s answers through an LLM and revoke any points a person gets for a condescending answer, even if accepted.

Give a warning and suggestions to better meet community guidelines.

It can be very toxic there.

Edit: I love the downvotes here. OP - AI is going to destroy the sources of truth and knowledge, in part because people stopped going to those sources because people were toxic at the sources. People: But I’ll downvote suggestions that could maybe reduce toxicity, while having no actual impact on the answers given.

I guess I’m a bit old school, I still love Wikipedia.

I use Wikipedia when I want to know stuff. I use ChatGPT when I need quick information about something that’s not necessarily super critical.

It’s also much better at looking up stuff than Google. Which is amazing, because it’s pretty bad. Google has become absolute garbage.

deleted by creator

To get a decent result on Google, you have to wade through 2 pages of ads, 4 pages of sponsored content, and maybe the first good result is on page 10.

ChatGPT does a good job at filtering most of the bullshit.

I know enough to not just accept any shit from the internet at face value.

deleted by creator

Why the fuck are you defending google so hard lmao.

Google will absolutely put bad information front and center too.

And by using Google you make Google richer. In fact you get served far more ads using Google products than chatGPT.

What’s your fucking point lmao.

Why the fuck are you defending google so hard lmao.

Ah yes, when I said “use a different search engine” as a solution to Google having issues I’m certainly defending Google! What an endorsement right? “Use a completely different service” is free publicity for Google!

Other search engines are even worse than Google lmao. Brave consistently provides literally the worst results. DuckDuckGo, same.

Are you actually serious.

I think you missed a part of their comment:

Block ads and use a different search engine?

Both Ecosia and DuckDuckGo have served me pretty well. Kagi also seems somewhat interesting.

Ecosia is working with Qwant on their own index, the first version of which has already gone online I believe. So they’re no longer exclusively relying on Bing/Google for their back-end.

I have yet to use an alternate search engine for any length of time (and i’ve tried a few) and think “ah yes, this was the kind of results I expected from my search”, they’re systematically worse than google, which is an incredible achievement, considering how absolute garbage google is nowadays.

Brave, which i’m using now, is atrocious with that. The amount of irrelevant bullshit it throws at you before getting to the stuff you are actually looking for is actually incredible.

Yep, that and occasionally Wiktionary, Wikidata, and even RationalWiki.

You’re right bro but I feel comfortable searching the old fashioned way!

Same but with Encyclopaedia Britannica

AI will inevitably kill all the sources of actual information. Then all we’re going to be left with is the fuzzy learned version of information plus a heap of hallucinations.

What a time to be alive.

AI just cuts and pastes from websites like Wikipedia. The problem is when it gets information that’s old or from a sketchy source. Hopefully people will still know how to check sources; it should probably be taught in schools. Who’s the author, how old is the article, is it a reputable website, is there a bias. I know I’m missing some pieces

You replied to OP while somehow missing the entire point of what he said lol

To be fair I didn’t read the article

That’s not ‘being fair’ that’s just you admitting you’d rather hear your own blathering voice than do any real work.

To be fair calling that work is stretching it

Much of the time, AI paraphrases, because it is generating plausible sentences not quoting factual material. Rarely do I see direct quotes that don’t involve some form of editorialising or restating of information, but perhaps I’m just not asking those sorts of questions much.

Man, we hardly did that shit 20 years ago. Ain’t no way the kids doing that now.

At best they’ll probably prompt AI into validating if the text is legit

Unfortunately, it’s gonna get bad before it gets worse.

Well that’s kind of reassu… oh

I’ve been meaning to donate to those guys.

I use their site frequently. I love it, and it can’t be cheap to keep that stuff online.

“With fewer visits to Wikipedia, fewer volunteers may grow and enrich the content, and fewer individual donors may support this work.”

I understand the donors aspect, but I don’t think anyone who is satisfied with AI slop would bother to improve wiki articles anyway.

The idea that there’s a certain type of person that’s immune to a social tide is not very sound, in my opinion. If more people use genAI, they may teach people who could have been editors in later years to use genAI instead.

That’s a good point, scary to think that there are people growing up now for whom LLMs are the default way of accessing knowledge.

Eh, people said the exact same thing about Wikipedia in the early 2000s. A group of randos on the internet is going to “crowd source” truth? Absurd! And the answer to that was always, “You can check the source to make sure it says what they say it says.” If you’re still checking Wikipedia sources, then you’re going to check the sources AI provides as well. All that changes about the process is how you get the list of primary sources. I don’t mind AI as a method of finding sources.

The greater issue is that people rarely check primary sources. And even when they do, the general level of education needed to read and understand those sources is a somewhat high bar. And the even greater issue is that AI-generated half-truths are currently mucking up primary sources. Add to that intentional falsehoods from governments and corporations, and it already seems significantly more difficult to get to the real data on anything post-2020.

But Wikipedia actually is crowd sourced data verification. Every AI prompt response is made up on the fly and there’s no way to audit what other people are seeing for accuracy.

Hey! An excuse to quote my namesake.

Hackworth got all the news that was appropriate to his situation in life, plus a few optional services: the latest from his favorite cartoonists and columnists around the world; the clippings on various peculiar crackpot subjects forwarded to him by his father […] A gentleman of higher rank and more far-reaching responsibilities would probably get different information written in a different way, and the top stratum of New Chusan actually got the Times on paper, printed out by a big antique press […] Now nanotechnology had made nearly anything possible, and so the cultural role in deciding what should be done with it had become far more important than imagining what could be done with it. One of the insights of the Victorian Revival was that it was not necessarily a good thing for everyone to read a completely different newspaper in the morning; so the higher one rose in society, the more similar one’s Times became to one’s peers’. - The Diamond Age by Neal Stephenson (1995)

That is to say, I agree that everyone getting different answers is an issue, and it’s been a growing problem for decades. AI’s turbo-charged it, for sure. If I want, I can just have it yes-man me all day long.

Alternative for DuckDuckGo:

https://noai.duckduckgo.com/?q=%25s

Edit: Lemmy/Voyager formats this string with 25 at the end. Remove the 25 & save it as a browser search engine

Using backticks can help

https://noai.duckduckgo.com/?q

edit: How odd, the equal sign disappears
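For what it’s worth, the stray 25 looks like ordinary percent-encoding: a literal `%` is written as `%25` in a URL, so `%s` turns into `%25s`. That Lemmy/Voyager is doing exactly this to the link is my assumption, not something I’ve confirmed. A minimal Python sketch of the effect, where the `template` name and the choice of `safe` characters are just for illustration:

```python
from urllib.parse import quote, unquote

# Search-engine template as intended: %s is the browser's placeholder for the query.
template = "https://noai.duckduckgo.com/?q=%s"

# Percent-encode the URL while leaving the usual separators alone.
# "%" is not in the safe set, so it is rewritten as "%25".
encoded = quote(template, safe=":/?=")
print(encoded)

# Decoding restores the original template, which is the same as deleting the "25" by hand.
restored = unquote(encoded)
print(restored)
```

So if the link you copied ends in `%25s`, deleting the 25 (or decoding it once) should give you back the `%s` form to save as a browser search engine.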

Not me. I value Wikipedia content over AI slop.

I asked a chatbot for scenarios of AI wiping out humanity and the most believable one is where it makes humans so dependent on it and so infantilized that we just eventually die out.

So we get the WALL-E future…

Mudd explains that he broke out of prison, stole a spaceship, crashed on this planet, and was taken in by the androids. He says they are accommodating, but refuse to let him go unless he provides them with other humans to serve and study. Mudd informs Kirk that he and his crew are to serve this purpose and can expect to spend the rest of their lives there.

Tbh, I’d say that’s not a bad scenario all in all, and much more preferable to scenarios with world war, epidemics, starvation etc.

Because people are just reading AI-summarized explanations of their searches; many of them are derived from blogs and can’t be verified against an official source.

Or the AI search just rips off Wikipedia.