https://damrnelson.github.io/github-historical-uptime/

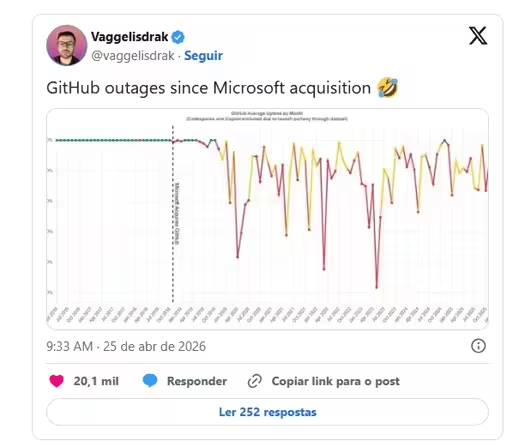

A lot of this is GitHub Actions alone, but a lot of it isn’t. I also don’t know how well GitHub tracked outages before the Microsoft acquisition. It’s entirely possible the graph looks so bad because they only took outage tracking seriously after being acquired. I don’t know.

That’s just fucking disgraceful.

Is that real? Because that… Makes it real clear…

Nothing to make a point like snipping off the y-axis scaling.

I hate Microslop like any person with > 2 brain cells, but that graph is useless - all visible y-entries end in a 0 - might as well be 99.990, 99.980, 99.970, …

It’s just Xitter’s image viewer cropping it automatically; the original upload has it.

It is still bad practice to select a narrow window from an axis like this and show a difference that seems massive relative to what is shown but isn’t that significant when we can see it in relation to the whole.

Graph 101

Lies! 89.98% has two nines in it!

I see two nines

Microsoft never promised where the nines would be

0.99%

Clearly you are not an llm then

Thank you, that is much more helpful than OP graph

I’m stupid what does zero nines uptime mean?

When contracting a service, usually there are clauses that specify that it needs to be fully working and available x% of time, and compensation may be due in case this goal isn’t met.

Let’s say GitHub was down for 1 full day in the last year, that’s 99.7% availability. That’s “2 nines”, but sometimes people might say “2 nines five”, meaning “better than 99.5% uptime”.

I’d say that the expectation for a high availability service nowadays is “5 nines”: 99.999% uptime. That’s around 5 minutes of downtime in a full year. This kind of performance from a site like GitHub is just unacceptable…
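For anyone who wants to check the arithmetic above, here is a minimal sketch. It assumes a 365-day year and treats "N nines" as an unavailability of 10^-N; the function names are made up for illustration.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes; leap years ignored


def availability_from_downtime(downtime_minutes: float) -> float:
    """Availability in percent, given total downtime over one year."""
    return 100.0 * (1.0 - downtime_minutes / MINUTES_PER_YEAR)


def downtime_for_nines(nines: int) -> float:
    """Yearly downtime budget in minutes for an 'N nines' target."""
    unavailability = 10.0 ** (-nines)  # e.g. 5 nines -> 0.00001
    return MINUTES_PER_YEAR * unavailability


if __name__ == "__main__":
    # One full day of downtime in a year.
    print(f"{availability_from_downtime(24 * 60):.2f}%")  # prints 99.73%

    # Downtime budget for one through five nines.
    for n in range(1, 6):
        print(f"{n} nines: {downtime_for_nines(n):.2f} minutes per year")
```

Two nines works out to roughly 3.65 days of allowed downtime per year, while five nines leaves a little over 5 minutes.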

These services measure their uptimes in number of nines, the more the better.

Sometimes the humorous term “nine fives” (55.5555555%) is used to contrast with “five nines” (99.999%),[18][19][20] though this is not an actual goal….

Maybe Microsoft misunderstood the assignment, and thought this was a goal. At their current rate, it’s certainly more achievable than the more traditional “five nines”.

As an aside, I love how the following is referenced as “casual”, and then the author starts arguing semantics:

Similarly, percentages ending in a 5 have conventional names, traditionally the number of nines, then “five”, so 99.95% is “three nines five”, abbreviated 3N5.[13][14] This is casually referred to as “three and a half nines”,[15] but this is incorrect….
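A rough way to see the point of that pedantry, assuming the number of nines is measured as -log10 of the unavailability (the quoted text does not spell out a formula, so treat this as one plausible reading; the function names are hypothetical):

```python
import math


def number_of_nines(availability_percent: float) -> float:
    """Number of nines as -log10(unavailability); an assumed definition."""
    if not 0.0 <= availability_percent < 100.0:
        raise ValueError("availability must be in [0, 100)")
    unavailability = 1.0 - availability_percent / 100.0
    return -math.log10(unavailability)


def availability_for_nines(nines: float) -> float:
    """Inverse of number_of_nines: availability in percent."""
    return 100.0 * (1.0 - 10.0 ** (-nines))


if __name__ == "__main__":
    print(f"99.95% is {number_of_nines(99.95):.2f} nines")
    print(f"3.5 nines is {availability_for_nines(3.5):.3f}%")
```

Under that definition 99.95% comes out at about 3.3 nines, and a literal 3.5 nines would be about 99.968%, which is presumably why the author objects to “three and a half nines”.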

the author starts arguing semantics

Legendary levels of pedantry, gave me a real good chuckle 🤭

Ah makes sense. Thanks

Move slow and break shit

It’s the best of both worsts.

Obv a gross-looking chart, but I am bothered that the left-hand scale is trimmed off. I expect those are 10% increments, but wouldn’t be shocked if the original was like 99.0, 98.0, 97.0, etc.

You’d be surprised: https://damrnelson.github.io/github-historical-uptime/

But weirdly enough it feels much worse using gh professionally than the scale makes it seem.

The graph is neat.

Saving some people a click: the cut-off y scale in the OP image is in 0.1% increments. So the lowest point is a little above 99.5%

Thank you! I was thinking “it can’t just be me that’s bothered”

How does this correspond with growth? I imagine having 100% uptime is much harder the bigger a platform is, so did GitHub grow a lot in the same period?

I’m not questioning whether or not Microsoft has issues, I just find it relevant whether or not they very suddenly saw a 2000% increase in server usage or something.

I imagine having 100% uptime is much harder the bigger a platform is, so did GitHub grow a lot in the same period?

it’s not. there are scale points where, once you hit a critical number, you need to re-architect your backend: 1k, 10k, 1mil, etc. these vary based on your app, but they’re usually exponential, so once you hit the higher levels it takes much longer to reach the next one.

on top of that, by the higher tiers you usually have proper backpressure, with signals being sent to the frontend systems to dynamically manage the load they generate. so suddenly uptime is much easier.
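to make “backpressure” concrete, here is a toy sketch: a bounded queue that refuses new work when it is full, so the caller gets an explicit signal to slow down instead of the backend falling over. the class and method names are made up for illustration, and this says nothing about how GitHub actually does it.

```python
import queue


class BoundedWorker:
    """Toy backend that accepts at most `capacity` pending jobs."""

    def __init__(self, capacity: int) -> None:
        self._jobs: queue.Queue = queue.Queue(maxsize=capacity)

    def submit(self, job: str) -> bool:
        """Try to enqueue a job; False is the backpressure signal."""
        try:
            self._jobs.put_nowait(job)
            return True
        except queue.Full:
            # Queue is saturated: tell the caller to back off and retry later.
            return False

    def take(self):
        """Remove and return the oldest pending job, or None if idle."""
        try:
            return self._jobs.get_nowait()
        except queue.Empty:
            return None


if __name__ == "__main__":
    worker = BoundedWorker(capacity=2)
    for name in ["a", "b", "c"]:
        accepted = worker.submit(name)
        print(name, "accepted" if accepted else "rejected, back off")
```

a real frontend would react to the rejection by retrying with a delay or shedding the request, which is what keeps load on the backend bounded.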

when you see large repeated failures like this the cause is almost always corporate causing issues.

- reducing engineering budget.

- not listening to the engineering department on product decisions. (see the recent AI-generated commit from a product manager that got merged and caused a mild uproar over “co-authored by copilot”)

- rushing nonsense out before it’s ready.

in this particular case i bet it’s cutting engineering head count and increasing AI-slop-generated code without proper review by engineers, which i’ve been hearing about a lot more from my engineering friends.